How does reactivity work in Java and Project Reactor? Key concepts, pitfalls, and when to choose virtual threads instead.

Reactive programming in Java has been covered in countless articles and even thick tomes, but this isn’t another A-to-Z compendium.

Let’s start with the bare essentials I wish I’d known before immersing myself in this world. I will show you the difference between the thread-per-request model and the reactive approach, tell you about a few mistakes we made ourselves, and, most importantly, point out the pitfalls that await beginners.

This will give you not only the theory, but also a real insight into what can actually go wrong. And finally, we will consider together whether reactivity still makes sense in the age of virtual threads.

Let's get started!

Inside the Traditional Thread-Per-Request Model

In the classic, intuitive approach, each request gets its own thread. It's simple, clear, and has worked great for years. The thread has the full context of the request, and you usually don't have to deal with synchronization. And even if you do, Java has some pretty good tools for that.

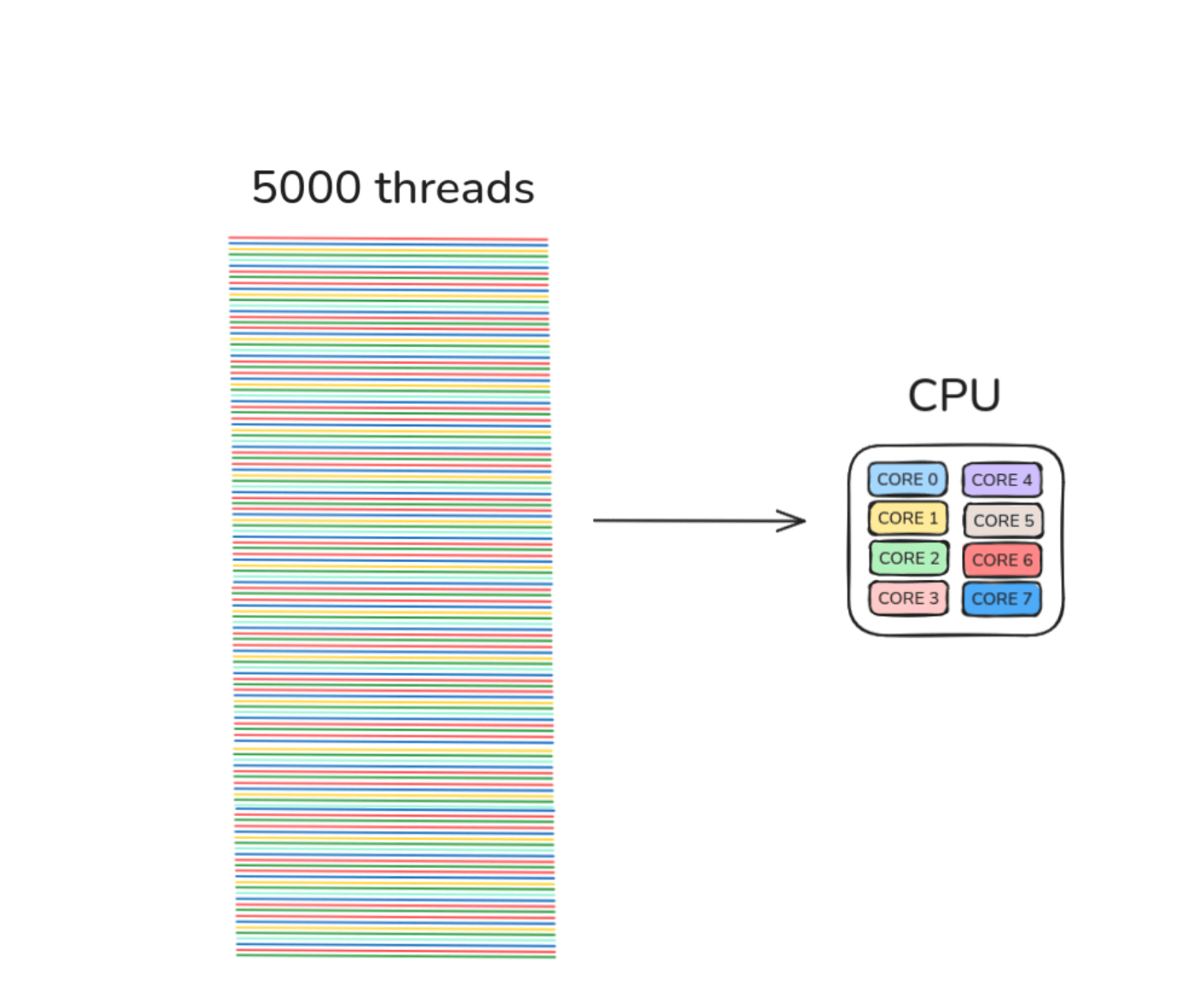

But... as is usually the case, the devil is in the details. Each such thread is an operating system thread, so it comes with a real cost: by default, the JVM reserves roughly 1 MB of stack memory per thread. If the system is able to comfortably maintain, say, 5,000 threads, then anything above that starts to be problematic.

Now combine this with the fact that you have, for example, an 8-core CPU:

5,000 threads are competing for 8 seats at the table.

A few of them will get CPU time, but the vast majority will be queued. There is the cost of context switching, slowdowns, and the classic “why does everything throttle at 2k req/s when we have so many threads?”
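To make the queuing effect tangible, here is a minimal sketch using only the JDK. The `handle` method and its 50 ms sleep are hypothetical stand-ins for a request handler that blocks on a database or HTTP call; the pool sizes are arbitrary.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

final class ThreadPerRequestDemo {

    // Hypothetical request handler: the sleep stands in for a blocking database or HTTP call.
    static String handle(int requestId) {
        try {
            Thread.sleep(50);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return "response-" + requestId;
    }

    // Serves `requests` requests with at most `threads` platform threads; returns elapsed milliseconds.
    static long serve(int requests, int threads) {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            long start = System.nanoTime();
            List<CompletableFuture<String>> futures = new ArrayList<>();
            for (int i = 0; i < requests; i++) {
                final int id = i;
                futures.add(CompletableFuture.supplyAsync(() -> handle(id), pool));
            }
            futures.forEach(CompletableFuture::join); // wait for every response
            return (System.nanoTime() - start) / 1_000_000;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        // With fewer threads than requests, the remaining requests wait in the queue.
        System.out.println("40 requests,  4 threads: " + serve(40, 4) + " ms");
        System.out.println("40 requests, 40 threads: " + serve(40, 40) + " ms");
    }
}
```

With 4 threads, each thread has to work through 10 blocking calls one after another, so the whole batch takes roughly ten times longer than with 40 threads. Adding threads helps only until memory and context switching become the bottleneck.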

That’s when the search for alternatives began, and that’s where reactive programming comes in.

Reactivity: What It Really Means

A Different Way of Thinking

To understand reactivity, you need to put the imperative approach aside for a moment. Here, your code doesn't say “do this now,” but rather “when X happens, do Y.”

If you've ever programmed a UI, this mindset won't be new to you: clicking a button, receiving a message, a change in temperature. These are all events that you react to. And until you subscribe to a given event stream, none of the code attached to it will run.
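The "nothing runs until you subscribe" rule can be sketched with Project Reactor as follows. This assumes `reactor-core` is on the classpath; the class name and the greeting logic are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import reactor.core.publisher.Mono;

final class NothingHappensUntilSubscribe {

    // Records the order in which things actually happen.
    static List<String> run() {
        List<String> log = new ArrayList<>();

        // Assembling the pipeline only describes the work; the callable is not invoked here.
        Mono<String> greeting = Mono.fromCallable(() -> {
            log.add("loading");
            return "hello";
        }).map(String::toUpperCase);

        log.add("assembled");

        // Subscribing is what triggers the callable and the map step.
        greeting.subscribe(value -> log.add("received " + value));
        return log;
    }

    public static void main(String[] args) {
        System.out.println(run()); // [assembled, loading, received HELLO]
    }
}
```

Note that "assembled" is logged before "loading": declaring the pipeline and running it are two separate moments.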

The same goes for HTTP, data streams, or anything else.

Event Loop

In the reactive approach, we have a small, precisely selected pool of threads that handle the event loop. There can be as many of them as there are CPU cores, sometimes a little more. And they handle all requests.

Why limit the number of threads so drastically? Because the fewer you have, the less context switching there is and the easier it is to use the CPU to its full potential.

Netty is a good example of a server operating in this model. If you ever want to dig deeper, it's definitely worth reading about what's going on underneath.

Non-Blocking Operations

It is crucial that the event loop never performs blocking operations. No blocking IO: neither to the database, nor to the disk, nor over the network.

Why? Because the processor works fast... until it has to wait. And IO operations are like standing in line at 5 p.m. on a Saturday at the supermarket. You may have several cash registers (threads), but if someone is making a huge purchase, the whole line comes to a standstill.

In a reactive application, there can be thousands of such “customers” and only 16 cash registers. A few blocking operations are enough to bring the entire architecture to a standstill.

The solution? If you need to perform blocking IO, do it on a separate thread pool. That way, you don't block the event loop and the world goes on.
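In Project Reactor, one common way to do this is `subscribeOn(Schedulers.boundedElastic())`. The sketch below assumes `reactor-core` is on the classpath; `blockingRead` is a hypothetical blocking call (think JDBC query or file read) that simply reports which thread it ran on.

```java
import reactor.core.publisher.Mono;
import reactor.core.scheduler.Schedulers;

final class OffloadBlockingCall {

    // Hypothetical blocking call; returns the name of the thread that executed it.
    static String blockingRead() {
        try {
            Thread.sleep(100);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return Thread.currentThread().getName();
    }

    // Without a scheduler, the work runs on whichever thread subscribes.
    static Mono<String> readOnSubscriberThread() {
        return Mono.fromCallable(OffloadBlockingCall::blockingRead);
    }

    // subscribeOn moves the blocking work to a pool intended for blocking tasks.
    static Mono<String> readOffloaded() {
        return Mono.fromCallable(OffloadBlockingCall::blockingRead)
                   .subscribeOn(Schedulers.boundedElastic());
    }

    public static void main(String[] args) {
        // block() is acceptable here only because main is not an event loop thread.
        System.out.println("caller thread:    " + Thread.currentThread().getName());
        System.out.println("no scheduler:     " + readOnSubscriberThread().block());
        System.out.println("with subscribeOn: " + readOffloaded().block());
    }
}
```

In a real service the subscriber is the event loop, so the first variant would stall it for the whole duration of the call, while the second one keeps it free.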

Other Advantages

Reactivity is not just about better CPU utilization. It also offers great opportunities for data stream processing.

Example: instead of waiting for the entire list of objects, you process items on the go as soon as they arrive. You save memory and gain time.

You might say, “You can do that with threads.” Sure, you can—but why bother? In reactivity, the API for stream operations is convenient, clear, and rich: stream merging, buffering, error handling, backpressure... everything is in place, without manual synchronization.

In the world of concurrency, that's really a lot.

My Experiences with Reactivity

At XTB, we use a reactive approach in many applications, mainly based on Project Reactor. Over the past three years, I have written quite a few microservices in Micronaut using Reactor. And let me be clear: the beginnings are difficult. Testing is even more difficult. Debugging? That's an extreme sport altogether.

The flow can hop between threads, and what you think will happen... often doesn't happen.

I've seen and experienced a lot of bugs resulting from Reactor's non-obvious behavior. I'll talk about a few of them below - just as a warning.

How to Get Started with Project Reactor?

First of all, start by reviewing the educational materials at https://projectreactor.io/learn. It's a really solid starting point - especially if you prefer to learn from short, specific examples rather than drowning in abstractions. Among other things, you will find a repository with practical exercises there: https://github.com/schananas/practical-reactor

After completing them, you should have a basic knowledge of Reactor that will allow you to start working on new functionalities.

If you are looking for more advanced materials, there is a great tech talk on YouTube that describes in depth how Reactor works.

At this stage, you may think you already know a lot, but when it comes time to write your own tests... you may find that the program works completely differently than you imagined. Testing a reactive application is very important. Especially if you are a beginner.

What if there is an error in the stream? Will the subscriber retry? What if a backpressure buffer overflows? What if different threads simultaneously emit different values to the sink?

And so on...

If you notice that the program is working differently than you expected, finding the cause and solving it can be a big challenge. Debugging is made difficult by the fact that the entire flow can be scattered across different threads. Sometimes you really have to dig deep into the documentation.

Common Mistakes and Pitfalls in Reactive Programming

Once you learn the basics and play around with simple examples, you will very quickly encounter situations where “something is wrong.” Reactor is powerful, but it can be counterintuitive. That's why I've collected a few pitfalls below that are easiest to fall into at the beginning.

Incorrect Use of flatMap

Disturbed Order

This is a classic mistake. We have a chronological stream of events:

Flux<Event> stream = ... // EVENT_1, EVENT_2, EVENT_3...

And a function:

Mono<EventDetails> fetchDetailsFor(Event event) { … }

It is tempting to write:

stream.flatMap(event -> fetchDetailsFor(event)).subscribe(...)

What's wrong with that?

flatMap works concurrently: it subscribes to the inner publishers eagerly and emits their results as they arrive, so it does not guarantee order. The final result may look like this:

EVENT_3 → EVENT_1 → EVENT_2

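If the order is important, you need to use concatMap (which processes one event at a time) or flatMapSequential (which still fetches concurrently, but emits the results in the original order).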
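The difference between the three operators can be sketched like this, assuming `reactor-core` is on the classpath. The `fetchDetailsFor` below is a hypothetical stand-in that takes an event number instead of an `Event`, and deliberately makes earlier events slower so that the reordering becomes visible.

```java
import java.time.Duration;
import java.util.List;
import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

final class OrderingDemo {

    // Hypothetical lookup: event 1 takes 300 ms, event 2 takes 200 ms, event 3 takes 100 ms.
    static Mono<String> fetchDetailsFor(int event) {
        return Mono.just("details-" + event)
                   .delayElement(Duration.ofMillis(400 - 100L * event));
    }

    // Concurrent, results in arrival order (here the fastest lookup tends to win).
    static List<String> withFlatMap() {
        return Flux.just(1, 2, 3).flatMap(OrderingDemo::fetchDetailsFor).collectList().block();
    }

    // One lookup at a time, source order preserved, slowest overall.
    static List<String> withConcatMap() {
        return Flux.just(1, 2, 3).concatMap(OrderingDemo::fetchDetailsFor).collectList().block();
    }

    // Concurrent lookups, but results are re-ordered to match the source.
    static List<String> withFlatMapSequential() {
        return Flux.just(1, 2, 3).flatMapSequential(OrderingDemo::fetchDetailsFor).collectList().block();
    }

    public static void main(String[] args) {
        System.out.println("flatMap:           " + withFlatMap());
        System.out.println("concatMap:         " + withConcatMap());
        System.out.println("flatMapSequential: " + withFlatMapSequential());
    }
}
```

The trade-off: concatMap gives up concurrency entirely, while flatMapSequential keeps it but has to buffer results that arrive ahead of their turn.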

“Starved” Streams

Second scenario: we combine ticks from multiple currency pairs (i.e., ticking exchange rates):

Flux<CurrencyPair> currencyPairs = getAllCurrencyPairs();
Flux<Tick> ticks = currencyPairs.flatMap(cp -> listenToTicks(cp));

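Sounds innocent, but flatMap has a default concurrency = 256. If you have 500 currency pairs (or more – and there are theoretically 32k of them), every stream beyond the first 256 will never be subscribed to and will never see demand (request(n)), because the tick streams never complete and never free up a slot. So they will never emit anything.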

Want them all? You need to set concurrency ≥ the number of streams.
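The effect is easy to reproduce. The sketch below assumes `reactor-core` is on the classpath and uses `Flux.never()` as a stand-in for a tick stream that never completes; it simply counts how many inner streams get subscribed for a given concurrency.

```java
import java.util.concurrent.atomic.AtomicInteger;
import reactor.core.Disposable;
import reactor.core.publisher.Flux;

final class FlatMapConcurrencyDemo {

    // Returns how many of `streams` never-completing inner streams actually get subscribed.
    static int subscribedStreams(int streams, int concurrency) {
        AtomicInteger subscribed = new AtomicInteger();
        Disposable subscription = Flux.range(0, streams)
                .flatMap(i -> Flux.<Integer>never()
                                  .doOnSubscribe(s -> subscribed.incrementAndGet()),
                         concurrency) // second argument: max number of inner streams in flight
                .subscribe();
        subscription.dispose();
        return subscribed.get();
    }

    public static void main(String[] args) {
        // 256 mirrors the default used when no concurrency argument is passed.
        System.out.println("concurrency 256: " + subscribedStreams(500, 256) + " of 500 subscribed");
        System.out.println("concurrency 500: " + subscribedStreams(500, 500) + " of 500 subscribed");
    }
}
```

The streams that are never subscribed fail silently: no error, no log entry, just missing data.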

Incorrect Use of Sinks

Sinks are great—until you emit an error. Consider:

while (true) {
    try {
        sink.emitNext(calculateScore(), FAIL_FAST);
    } catch (...) {
        sink.emitError(e, FAIL_FAST);
    }
}

Once emitError has been called, the sink is terminated. Every new subscriber will immediately get the error. No retry, no new data - nothing. Such a sink can only be replaced with a new one.

The second pitfall is autoCancel = true. When the last subscriber cancels, the sink shuts down and no longer emits anything. This can be surprising.
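Both pitfalls can be observed through the result of `tryEmitNext`. This is a minimal sketch assuming `reactor-core` is on the classpath and a multicast sink created with `onBackpressureBuffer`; the buffer size of 16 is arbitrary.

```java
import reactor.core.publisher.Sinks;

final class SinkTerminationDemo {

    // Pitfall 1: after an error has been emitted, further emissions are rejected.
    static Sinks.EmitResult emitAfterError() {
        Sinks.Many<Integer> sink = Sinks.many().multicast().onBackpressureBuffer();
        sink.tryEmitNext(1);
        sink.tryEmitError(new IllegalStateException("score calculation failed"));
        return sink.tryEmitNext(2);
    }

    // Pitfall 2: with autoCancel enabled, the sink shuts down once its last subscriber is gone.
    static Sinks.EmitResult emitAfterLastSubscriberLeft(boolean autoCancel) {
        Sinks.Many<Integer> sink = Sinks.many().multicast().onBackpressureBuffer(16, autoCancel);
        sink.asFlux().subscribe().dispose(); // the only subscriber comes and goes
        return sink.tryEmitNext(1);
    }

    public static void main(String[] args) {
        System.out.println("after emitError:   " + emitAfterError());
        System.out.println("autoCancel=true:   " + emitAfterLastSubscriberLeft(true));
        System.out.println("autoCancel=false:  " + emitAfterLastSubscriberLeft(false));
    }
}
```

One possible way around the first pitfall, not necessarily the right one for every case, is to catch the exception in the producer loop and either skip the value or emit a regular element that describes the failure, so that the sink itself never terminates.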

Incorrect Linking of a Snapshot with a Change Subscription

We want to have:

- the current snapshot,

- and then all subsequent changes.

The code seems fine:

Mono<Portfolio> snapshot = Mono.defer(() -> readPortfolioSnapshot());
Flux<Portfolio> changes = Flux.defer(() -> listenToPortfolioChanges());
return Flux.mergeSequential(snapshot, changes);

It looks good, but there is a problem: even a minimal delay between the two subscriptions can “lose” updates that occur between retrieving the snapshot and subscribing to changes.

The documentation says “eager subscription,” but according to an issue on GitHub, demand for subsequent sources is only signaled after the previous ones have completed.

The solution? Don't use defer for changes:

Mono<Portfolio> snapshot = Mono.defer(() -> readPortfolioSnapshot());
Flux<Portfolio> changes = listenToPortfolioChanges();
return Flux.mergeSequential(snapshot, changes);

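The snapshot should be lazy – the stream of changes should not. Without defer, listenToPortfolioChanges() is called as soon as the pipeline is assembled, that is, before the snapshot is read.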

Does Reactivity Still Make Sense with Virtual Threads?

With the advent of virtual threads, the thread-per-request approach has been given a second life. Virtual threads are lightweight, cheap to create, and can be created by the thousands or even millions (because they are not mapped 1:1 to system threads). It sounds like “problem solved, let's go home.”

But the laws of physics still apply. A million virtual threads performing intensive calculations will not get more work done than the 8 cores they run on. IO? Yes – this is where virtual threads shine, because they hand the CPU over to others while waiting. But each of them still allocates memory and is visible to the GC, so scale matters.

So what? Reactivity again?

In my opinion: it depends.

If you handle regular request–response, virtual threads are a great choice today. It is also worth mentioning structured concurrency, which further simplifies the classic approach. However, if you have data streams on which you perform a lot of operations, reactivity still has real advantages, especially when combined with server-side streaming (e.g., gRPC).
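For comparison, this is roughly what thread-per-request looks like with virtual threads. The sketch uses only the JDK and assumes Java 21 or newer; `handle` and its 100 ms sleep are hypothetical stand-ins for a handler that blocks on IO.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

final class VirtualThreadPerRequestDemo {

    // Hypothetical request handler: plain blocking code, no reactive operators.
    static String handle(int requestId) {
        try {
            Thread.sleep(100); // while blocked, the virtual thread releases its carrier thread
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return "response-" + requestId;
    }

    // Runs every request on its own virtual thread and returns the number of responses.
    static int serve(int requests) {
        // close() at the end of the try block waits for all submitted tasks.
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            List<CompletableFuture<String>> futures = new ArrayList<>();
            for (int i = 0; i < requests; i++) {
                final int id = i;
                futures.add(CompletableFuture.supplyAsync(() -> handle(id), executor));
            }
            return (int) futures.stream().map(CompletableFuture::join).count();
        }
    }

    public static void main(String[] args) {
        long start = System.nanoTime();
        int responses = serve(10_000);
        long elapsedMs = (System.nanoTime() - start) / 1_000_000;
        System.out.println(responses + " responses in " + elapsedMs + " ms");
    }
}
```

The handler stays simple, sequential code, which is the main appeal: ten thousand concurrent blocking calls without a thread pool to size and without a reactive pipeline to debug.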

Summary

By now, you have a solid understanding of what reactivity is and when it makes sense to use it. We’ve also walked through some pitfalls we encountered during development. Hopefully they will save you both time and a few headaches along the way.

If you work in a stream-based environment, reactivity can be a powerful tool. But in most cases, you don't need to resort to it, as virtual threads can really simplify your life.

If this topic sparks your interest or you have your own experiences (or war stories) to share, feel free to get in touch. You can find me on LinkedIn.